From Phantom Lungs to AI Co-Pilots: OASIS Hub at the Science of Surgery

Researchers from the OASIS Hub demonstrated how multimodal imaging and intelligent systems are reshaping the operating theatre

Infrared cameras, robotic imaging systems, and an AI surgical co-pilot - these were some of the technologies showcased by OASIS Hub researchers at the UCL Hawkes Institute's flagship public event.

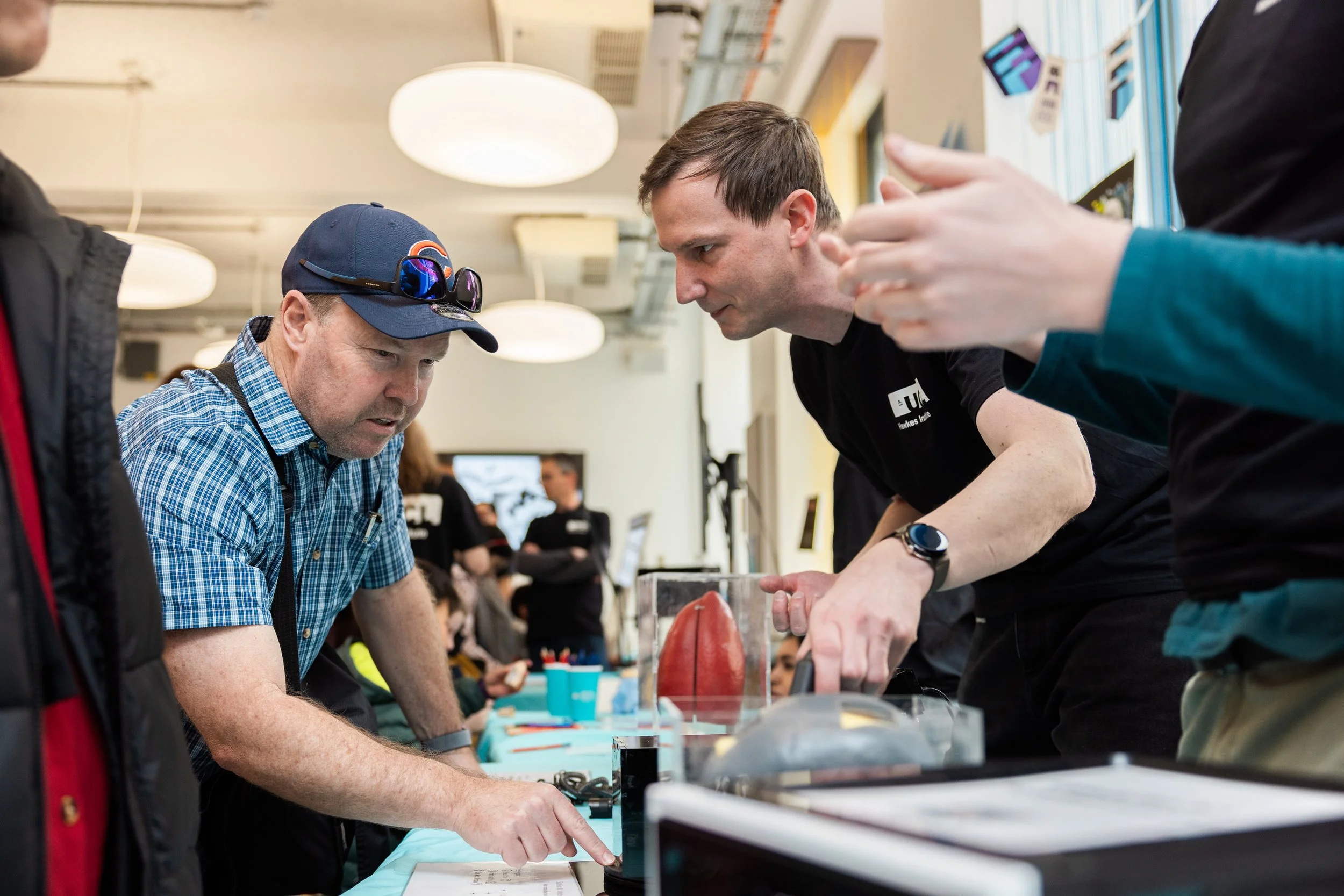

On Friday 10 April, more than 500 visitors came to Charles Bell House to explore the latest advancements in medical imaging, computer software and surgical tools.

As part of the event, OASIS Hub researchers Dr Erwin Alles, Dr Zhehua Mao and Alex Saikia presented demonstrations of their latest work.

Dr Erwin Alles, alongside his Multimodal Interventional Sensing and Imaging (MISI) research team, put together three interactive stations conveying the fundamental principles underlying multimodal imaging. These illustrated why the integration of complementary imaging techniques is essential for comprehensive clinical assessment: no single modality provides a complete diagnostic picture, and the strengths of each technique compensate for the limitations of the others.

The first station employed a lung phantom to show that visual inspection under standard illumination is diagnostically insufficient, but that imaging across alternative wavelengths of light can reveal pathological features invisible to the naked eye - underscoring the principle that modality selection must be matched to the tissue property or pathological feature of interest.

The second station used an opaque enclosure to simulate the challenge of imaging through overlying tissue, demonstrating that techniques such as infrared imaging can penetrate optically opaque barriers in a manner analogous to the transmission of non-visible electromagnetic or acoustic energy through biological tissue.

The third station built upon the second by presenting a scenario in which infrared imaging alone yielded an incomplete diagnosis, identifying regions of interest but failing to characterise them definitively. Visitors were then invited to perform manual palpation through apertures in a 3D-printed skull model to detect localised increases in tissue stiffness - simulating the diagnostic complementarity of combined modalities such as CT and elastography.

Another MISI activity was delivered by undergraduate student Marva Mukhtar. Marva developed a crafting activity in which visitors were first given a brief introduction to the various medical imaging technologies, and then tasked with decorating a "patient" (Russian nesting dolls of various sizes, where each size corresponds to a specific imaging modality) to showcase what can, or cannot, be seen by that modality, as well as its benefits or risks.

Dr Zhehua Mao presented Surg-CoPilot, an AI system developed by the Surgical Robot Vision research group to support surgeons during complex pituitary tumour resections.

Through a live demonstration, Zhehua showed how the system provides real-time intraoperative guidance by identifying regions of the skull base that can be safely accessed, while flagging high-risk areas where critical anatomical structures lie.

Acting as an intelligent co-pilot, Surg-CoPilot is designed to reduce the risk of surgical complications and support better patient outcomes in one of neurosurgery's most technically demanding procedures.

Alex Saikia showcased a robotic hyperspectral-RGB laparoscopic system designed to support rapid intraoperative data acquisition for the development of surgical guidance algorithms.

Through a live interactive demonstration, Alex showed how surgeons commonly use fluorescent contrast agents to aid perfusion assessment and region identification. The research directly addresses the limitations associated with fluorescent dyes by leveraging hyperspectral imaging for contrast-free guidance.

As hyperspectral imaging is relatively new compared to the RGB cameras now standard in robotic and hand-held minimally invasive surgery, data collected by the system can be used to assess whether hyperspectral imaging can outperform RGB imaging across widely studied downstream tasks such as 3D reconstruction.